States across the country are rushing to push through legislation to contain the explosion in deepfake porn amid an artificial intelligence boom.

Lawmakers are feeling the heat after more than 800 experts, celebrities, politicians, and activists signed an open letter demanding legislative action against deepfake technology that ‘poses a huge threat to society’.

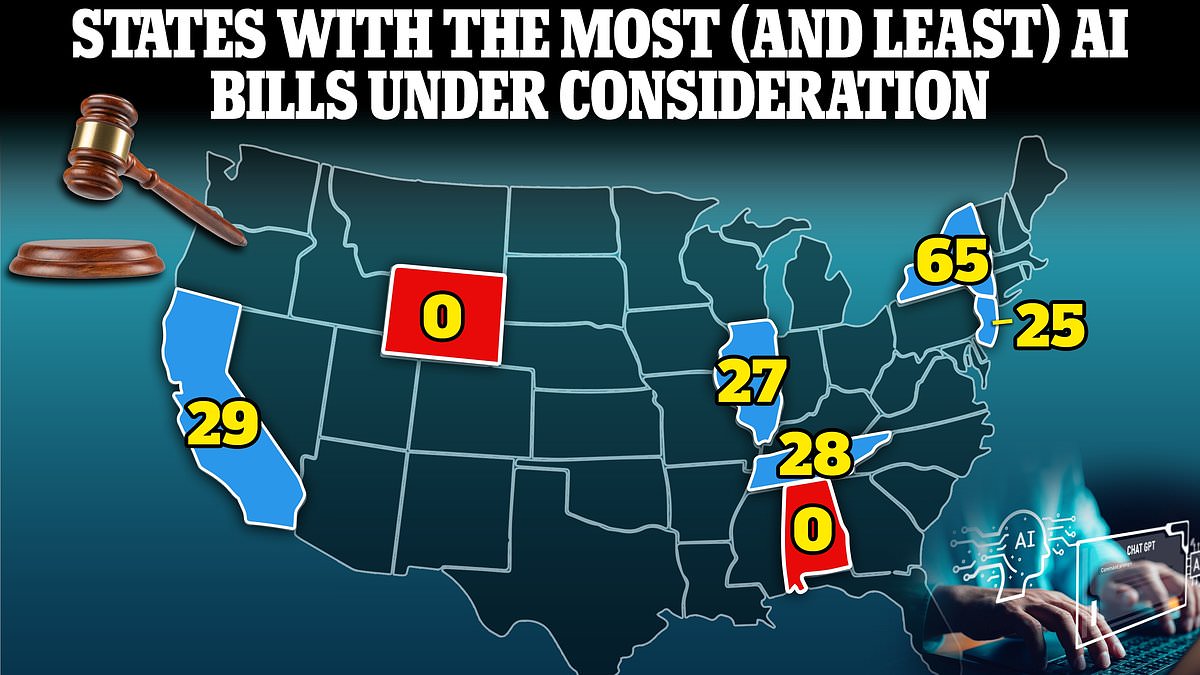

There are currently 407 AI-related bills being considered across 40 states, nearly half of which address deepfakes, according to software industry group BSA.

New York is leading the way, with 65 bills currently under consideration, followed by California with 29. Others, such as Alabama and Wyoming, have yet to consider any new AI legislation.

Craig Albright, BSA vice president for government relations, told DailyMail.com: ‘The explosion of generative AI tools that consumers can play with themselves has placed the issues around AI front and center for millions of people, and government at all levels is trying to get ahead of the issues as best they can.

‘States are attempting to increase penalties for misuse of AI, such as generating and distributing deepfake porn or fake political videos.’

However, he warned this is the ‘lowest hanging fruit of the AI wave that is just starting.’

Taylor Swift was targeted by sexually explicit deepfake images that went viral on X last month

Deepfake porn crisis

The wave of proposed legislation is likely to continue: January saw a spike in new bills, at a rate of 50 per month, half of which focused on deepfakes.

Deepfake bills are the most likely to be passed. Of 37 deepfake-focused bills proposed last year, six have so far been passed, according to analysis by Axios.

‘Penalties for deepfakes is the hot topic,’ Albright said. ‘You have victims across the country; non-consensual intimate images have hurt a lot of people.’

Deepfake porn is AI-generated media that shows the likeness of a real person manipulated into an explicit or pornographic image or video.

In the celebrity-backed ‘Disrupting the Deepfake Supply Chain’ letter, Andrew Critch, an AI researcher at UC Berkeley, wrote that deepfakes are a ‘huge threat to human society and are already causing growing harm to individuals [and] communities’.

The letter calls for a blanket ban on deepfake technology, and demands that lawmakers fully criminalize deepfake child pornography and establish criminal penalties for anyone who knowingly creates or shares such content.

Calls for more stringent regulations come after sexually explicit deepfake images of Taylor Swift went viral on social media last month.

It is part of a wider problem: deepfake porn of celebrities has more than doubled in just a year.

Other high-profile victims include Natalie Portman, Emma Watson and Scarlett Johansson.

The majority of people targeted by deepfakes are women, with videos surfacing as early as 2018. One video targeted actress Natalie Portman

Emma Watson was yet another target of deepfake technology when a fake video of her surfaced online

Between 2022 and 2023, the amount of deepfake sexual content increased by more than 400 percent.

Last year, more than 30 teenage girls in New Jersey fell victim to deepfake porn when their male classmates created AI-generated sexual content of them and spread the images online.

Researchers told DailyMail.com ‘no one is safe’ from having their images violated.

And the explosion of deepfake porn is unlikely to slow, with OpenAI unveiling its Sora text-to-video tool earlier this month.

Although not yet available to the public, the tool can generate hyper-realistic short videos from a text prompt.

Marvel actress Scarlett Johansson was targeted last year when a deepfake video of her promoting Lisa AI surfaced.

Kristen Bell was yet another celebrity targeted by a deepfake video last year

Male students at Westfield High School in New Jersey (pictured) used an AI-powered website to generate pornographic images of their classmates

Discrimination fears

OpenAI’s high-profile CEO Sam Altman recently warned that ‘subtle societal misalignments’ embedded into AI could have ‘disastrous consequences.’

‘I’m not that interested in the killer robots walking on the street direction of things going wrong,’ Altman told the World Governments Summit in Dubai last week.

‘I’m much more interested in the very subtle societal misalignments, where we just have these systems out in society and through no particular ill intention, things just go horribly wrong,’ he explained via video link.

States such as California, Massachusetts, New York, New Jersey and Washington are considering impact assessments to understand and limit the risks of certain types of AI.

These risks include racial bias unintentionally built into systems by engineers and early users, which could cause problems in areas such as law enforcement software.

Connecticut passed a bill in January that requires ongoing assessments of the state’s use of AI to ensure its implementation does not cause discrimination or have a disparate impact on individual groups.

‘Connecticut is very important in wanting to take a leadership role,’ Albright told DailyMail.com.

In a similar vein, Texas has created an AI advisory council to study and monitor artificial intelligence systems developed, employed or procured by state agencies.

North Dakota, Puerto Rico and West Virginia are also creating similar councils.


OpenAI’s Sora tool created this video of golden retriever puppies playing in the snow

The tool was given the prompt ‘drone view of waves crashing against the rugged cliffs along Big Sur’s garay point beach’ to create this hyper-realistic video

The company has unveiled examples that are unlikely to be offensive, but experts warn the new technology could unleash a new wave of extremely lifelike deepfakes

Copyright concerns

Tennessee has moved to impose legislative AI guardrails, driven largely by concern for its world-famous music industry.

The state’s Ensuring Likeness, Voice, and Image Security (ELVIS) Act was approved in January by the state House Banking and Consumer Affairs subcommittee in a unanimous vote.

The Act aims, for example, to prevent artists’ voices from being used without their permission in songs they didn’t create.

Other states already have legislation that prevents practices such as cloning a celebrity’s voice and manipulating it to advertise products without permission.

The ELVIS Act, introduced by Tennessee Governor Bill Lee, specifically aims to protect artists whose voice or likeness is used to sell fake musical output.

‘If I’m using a fake version of Drake’s voice or of Taylor Swift’s voice or of anybody else’s voice, not to sell a product, but as a fake song, this bill would target that in a way that other states don’t already,’ Joseph Fishman, a copyright and entertainment law professor at Vanderbilt University, told WATE News.

President Joe Biden revealed he had viewed an AI-generated video of himself, warning of potential abuse of the technology as he signed new executive actions

Threat to democracy

Around half the planet’s population lives in countries holding elections in 2024.

In the US, the presidential election is set to be particularly pivotal and is already riven with issues surrounding deepfakes and false political advertising.

Earlier this month several major technology companies signed a pact to take ‘reasonable precautions’ to prevent artificial intelligence tools from being used to disrupt democratic elections around the world.

Executives from Adobe, Amazon, Google, IBM, Meta, Microsoft, OpenAI and TikTok have vowed to adopt preventative measures.

‘Everybody recognizes that no one tech company, no one government, no one civil society organization is able to deal with the advent of this technology and its possible nefarious use on their own,’ said Nick Clegg, president of global affairs for Meta, after the signing.

The accord, however, is largely symbolic and does not include a commitment to remove or ban deepfakes.

Instead, it outlines various methods the signatories will use to detect and label deceptive AI content when it is created or distributed on their platforms.